Why Lighthouse scores can be misleading

You've probably seen them on social media: people proudly posting a green Lighthouse Performance Score, also known as the Google PageSpeed Insights Score. These people know that good web performance matters to their visitors. They put a lot of effort into improving the site, on their own or together with a team of stakeholders, to achieve such a great result.

They know that better performance gives users a better experience, makes it easier for users to achieve their goals on the website, and that this user behavior also contributes to the website's business goals.

But what they probably don't know is that the Lighthouse Performance Score is not the appropriate way to assess whether a website is performing well in terms of loading speed, responsiveness and visual stability.

Read this article to find out why you should not use the Lighthouse Performance Score, which alternative is better suited, and exactly how that alternative works.

What exactly is the PageSpeed Score?

The PageSpeed Score represents an evaluation conducted in controlled laboratory settings using synthetic tests performed by automated tools like Google's PageSpeed Insights or Lighthouse. These tests simulate web browsing scenarios under specific predefined conditions.

This type of testing, known as synthetic testing, collects lab data on how a potential user of the site, not a real one, would perceive the website in terms of web performance.

Use of synthetic testing offers a number of advantages:

- Allows for consistent benchmarking between websites as it eliminates variables associated with real-world usage (e.g., device capabilities, network speed).

- Helps identify technical issues affecting performance that can be resolved through code optimisation techniques.

- Provides actionable recommendations to improve page loading speed and overall performance.

For many people, these insights are practical and easy to understand, and when you apply the recommendations to your website you can immediately check whether they produce the desired result.

However, collecting this type of lab data also has its limitations:

- Does not consider variations in real-world usage patterns or diverse user contexts.

- Might not accurately reflect how users actually perceive site speed or usability.

- Only collects data from the initial page load. Post-load responsiveness cannot be measured because no user interactions take place.

- Tests are often run against a cookie wall, so performance is measured without the third-party resources that the cookie wall blocks.

A Google PageSpeed user never visits your website

The biggest shortcoming of synthetic testing is that it cannot tell you whether users of your site are having a good experience. Unfortunately, that is exactly how it is used by countless people who understand the importance of good web performance.

It makes a significant difference to web performance whether a user visits your website at home, via Wi-Fi or a wired connection, or on the go. A home network is generally stable, and a desktop or laptop is a powerful device. Mobile networks, however, vary in performance: even with a 4G or 5G connection, a good experience cannot always be guaranteed. Bandwidth differs, and latency on a mobile network is much higher than on a home or office network.

State of mind also affects how users perceive speed: a user on the go may be in a bit of a hurry, while a user at home has all the time in the world. The device is decisive as well. There is a very wide spectrum of mobile devices in use that all perform differently, and the same holds for the devices of your site visitors.

Google PageSpeed Insights does not take this into account. It simulates only two types of user, a mobile user and a desktop user, each with fixed characteristics. Chances are that exactly this type of user is not part of your user base and never visits your site.

Which measuring method is suitable?

Using field data based on Real User Monitoring (RUM) is the proper way to assess whether your website is performing well in terms of loading speed, responsiveness and visual stability.

Field data can be collected with specialised tools. Fortunately, Google also provides field data through its Chrome UX Report (CrUX). Although this data is delayed by two days, and the full effect of changes or optimisations is only visible after 28 days because the data is aggregated over a rolling 28-day window, it is a good fit. It also removes the limitations of lab data:

- Reflects the real-world experience of users across various devices, network conditions, and geographical locations.

- Provides insights into how actual visitors perceive the performance of a website using (Core) Web Vitals, which also cover user interactions (initial and post-load measurements).

- Avoids a false sense of security: relying solely on synthetic tests may lead developers or testers to believe that their application is functioning optimally, while under realistic usage patterns it may still have undiscovered problems.

Field data is based on real users and provides a realistic view of how performance is perceived. It does have limitations. It requires significant traffic to generate statistically reliable results, and CrUX only provides data once a certain traffic threshold is met. If your website or URL has low traffic, it might not meet that threshold. If your website launched recently or underwent significant changes, it may take some time for CrUX to gather enough data about user experiences on your site. CrUX is also limited to Chrome users, and it only covers the subset of those users who have opted in to sharing their usage data.

Like lab data, field measurements can change due to modifications within the site, but they also change with the visitors themselves, for example when the devices, networks or browsers they use shift. Unlike in lab measurements, these factors are not static but dynamic, just like in real life.

But field data does not give me a score, now what?

We know that marketers are fond of scores. Scores provide a standardized way to evaluate the success or effectiveness of marketing efforts, and in the case of the Google PageSpeed Score, web performance efforts. Scores help marketers assess performance, track progress, and compare different web performance tactics or strategies.

Additionally, scores make it easier to communicate results and findings to stakeholders within an organisation. Scores are understood without having to know all the technical details about web performance metrics or (Core) Web Vitals. Marketers (or other stakeholders) concerned with web performance can present scores in a clear and concise way that is easily understood by executives, managers or operational staff who may not be directly involved in web performance activities.

Scores also enable benchmarking against competitors or industry standards. By comparing their scores with others in the same market segment, marketers can gain insights into areas where improvement is needed or identify opportunities for differentiation.

Ultimately, using scores helps marketers streamline their analysis process and provides a common language for discussing web performance across teams and organisations.

Introducing the UX Score

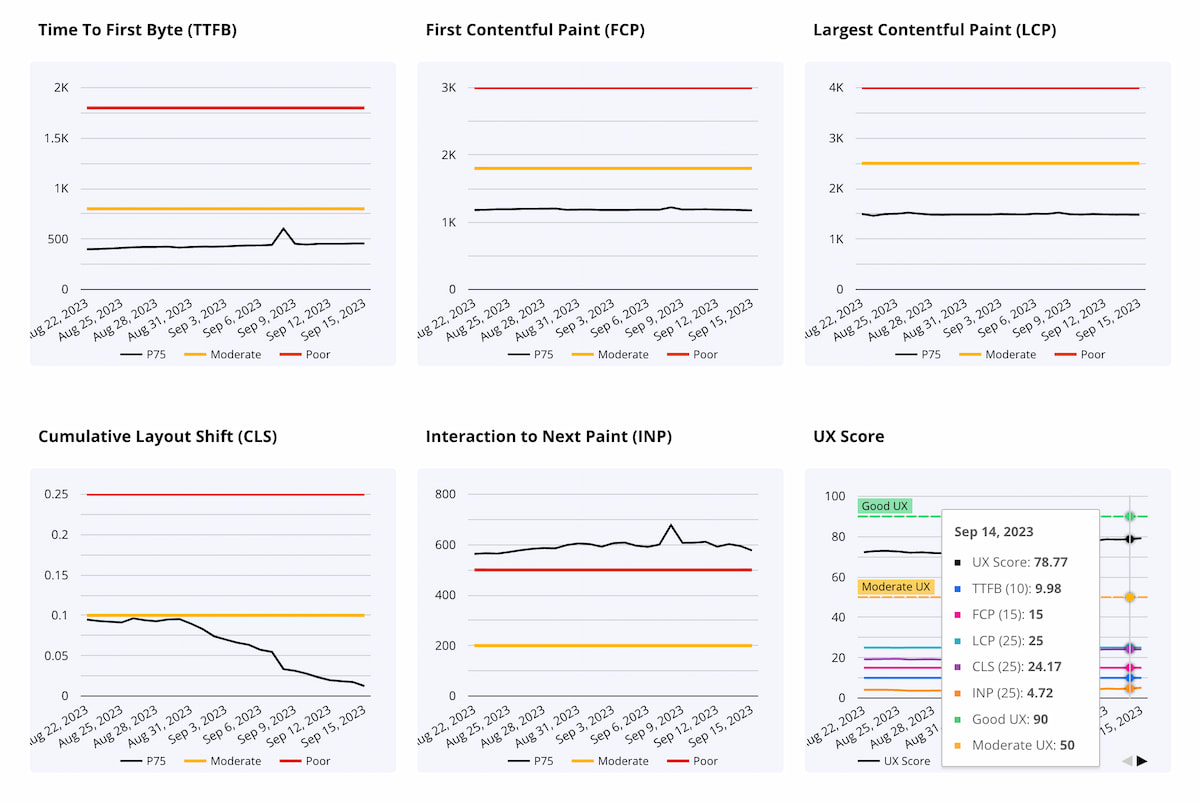

With Google’s (Core) Web Vitals we have metrics to evaluate the user experience, with each of the metrics assessing a different UX aspect that contributes to the overall user experience of a page, template or website.

For each metric we also have evaluation criteria: Core Web Vitals include target thresholds for each metric, which help to determine whether the experience is "good", "needs improvement" or "poor".
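To make the thresholds concrete, here is a minimal sketch of how a 75th-percentile value could be mapped to one of the three ratings. The threshold values are the ones Google publishes for the Core Web Vitals; the `THRESHOLDS` table and the `rate` function are hypothetical names used for illustration only.

```python
# Published Core Web Vitals thresholds as (upper bound of "good", upper bound of
# "needs improvement"). LCP and INP are in milliseconds; CLS is unitless.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def rate(metric: str, p75: float) -> str:
    """Return the rating for a 75th-percentile value of the given metric."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if p75 <= good_max:
        return "good"
    if p75 <= needs_improvement_max:
        return "needs improvement"
    return "poor"
```

For example, `rate("INP", 350)` falls between the two INP thresholds and is therefore rated "needs improvement".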

An evaluation method is also already available: Google collects user experiences through Google Chrome and Web Vitals. The aggregated and anonymised Chrome metrics data is available through sources such as Google BigQuery, the CrUX API and various web-based tools.

The next step, which requires some effort and discussion, is to develop a scoring system that assigns values or weights to the different evaluation criteria based on their importance in contributing to an optimal user experience.

Now we have a UX Score that helps us evaluate the success or effectiveness of web performance with our clients. Above all, this score is understood by a variety of stakeholders. Most importantly, it allows us to assess whether a client's page, template or website, or that of its competitors, is performing well in terms of loading speed, responsiveness and visual stability, simply because the score is based on the experiences of real users.
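As an illustration of the CrUX API route, the sketch below builds a `records:queryRecord` request and pulls the 75th-percentile values out of a response. It is a rough outline, not the tooling we use: the helper names are made up for this example, you need your own API key, and the form factor defaults to `PHONE` purely as an assumption.

```python
import json
import urllib.request
from urllib.parse import urlencode

CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord"


def build_request(origin: str, api_key: str, form_factor: str = "PHONE") -> urllib.request.Request:
    """Build the POST request that asks CrUX for origin-level field data."""
    body = json.dumps({"origin": origin, "formFactor": form_factor}).encode("utf-8")
    return urllib.request.Request(
        f"{CRUX_ENDPOINT}?{urlencode({'key': api_key})}",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_p75(response: dict) -> dict:
    """Collect the p75 value of every metric that reports percentiles.

    CrUX returns CLS as a string and the timing metrics as numbers,
    so everything is converted to float.
    """
    metrics = response["record"]["metrics"]
    return {
        name: float(data["percentiles"]["p75"])
        for name, data in metrics.items()
        if "percentiles" in data
    }


def fetch_p75(origin: str, api_key: str) -> dict:
    """Perform the network call (requires a valid API key)."""
    with urllib.request.urlopen(build_request(origin, api_key)) as resp:
        return extract_p75(json.load(resp))
```

Calling `fetch_p75("https://example.com", "YOUR_API_KEY")` would return a dictionary keyed by CrUX metric names such as `largest_contentful_paint`, provided the origin has enough traffic to be included in the dataset.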

Breaking down the UX Score

There are of course different ways to set up a scoring system. The approach we have chosen is no secret and we are happy to share it with you. We hope you are now convinced that a UX Score based on real user data is a better option than the Google Lighthouse Score, and that you will consider using this UX Score yourself, or even provide input that contributes to a better calculation of it.

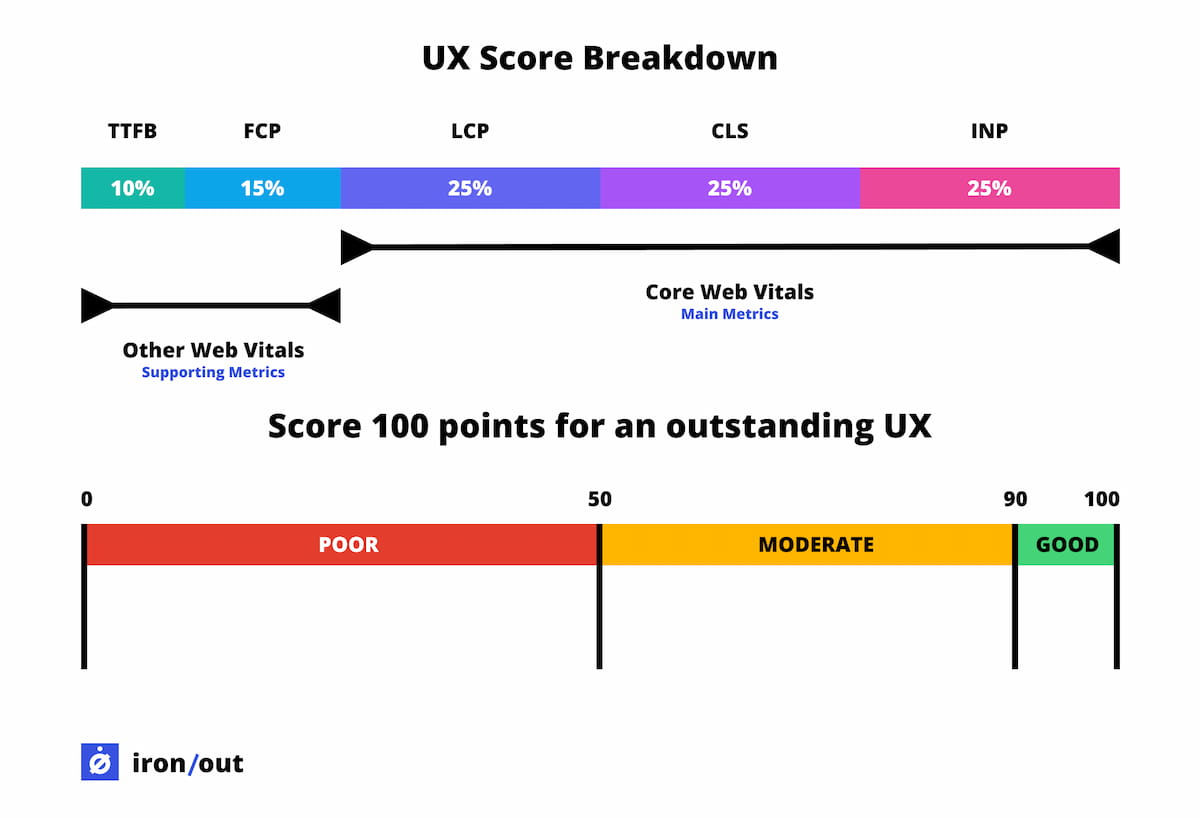

Our scoring system starts with a base calculation. We give each Web Vital metric a score based on its current performance and where it falls between its minimum and maximum limits (derived from the target thresholds for each metric). Regardless of how well or badly the metric performs, its score stays between 0 and 100 points.
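One way to picture this base calculation is a clamped linear interpolation between two limits. The sketch below is an assumption about the shape of the curve, not the exact formula behind the UX Score: the function name `base_score`, the straight-line mapping, and the choice of LCP's "good" and "poor" thresholds (2500 ms and 4000 ms) as example limits are all illustrative.

```python
def base_score(value: float, best: float, worst: float) -> float:
    """Map a metric value onto 0..100 points.

    Values at or better than `best` score 100, values at or worse than
    `worst` score 0, and everything in between is interpolated linearly.
    Lower metric values are better, so `best` must be smaller than `worst`.
    """
    fraction = (worst - value) / (worst - best)
    return max(0.0, min(100.0, fraction * 100.0))


# Example with LCP in milliseconds, using 2500 and 4000 as assumed limits.
halfway = base_score(3250, best=2500, worst=4000)    # 50.0
very_fast = base_score(1000, best=2500, worst=4000)  # clamped to 100.0
very_slow = base_score(6000, best=2500, worst=4000)  # clamped to 0.0
```

The clamping is what keeps the score between 0 and 100 no matter how far a metric lies outside its limits.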

Next, we factor in weights. The Core Web Vitals, Largest Contentful Paint (LCP), Cumulative Layout Shift (CLS) and Interaction to Next Paint (INP), play central roles, each contributing 25 points. As you can see, we have already said goodbye to First Input Delay (FID): we use Interaction to Next Paint (INP) to assess page responsiveness and calculate its share of the score. The supporting metrics are weighted differently: Time to First Byte (TTFB) contributes 10 points, while First Contentful Paint (FCP) contributes 15 points.
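The weighting can be sketched as a simple weighted sum. The weights below are the ones stated above; the function name `ux_score` and the assumption that each metric arrives as a 0 to 100 base score are illustrative.

```python
# Weights in points, as described in the text. They add up to 100.
WEIGHTS = {"LCP": 25, "CLS": 25, "INP": 25, "FCP": 15, "TTFB": 10}


def ux_score(metric_scores: dict) -> float:
    """Combine per-metric base scores (0..100 each) into a total of 0..100."""
    return sum(metric_scores[name] * weight / 100 for name, weight in WEIGHTS.items())


# Perfect Core Web Vitals but the worst possible supporting metrics.
core_only = ux_score({"LCP": 100, "CLS": 100, "INP": 100, "FCP": 0, "TTFB": 0})  # 75.0
```

Note that perfect Core Web Vitals alone yield 75 points, so FCP and TTFB still have to perform reasonably well to reach a high total.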

Just like with the PageSpeed Score, you are aiming for 100 points. A total score of 100 indicates excellence across loading speed, visual stability and responsiveness. It also indicates that the site's server responds swiftly (TTFB) and that initial content is displayed promptly (FCP) when loading.

Since a score of 90 or higher indicates that the page’s user experience is perceived as “good,” it makes sense to prioritise the core metrics, which together account for 75 of the 100 points. Core Web Vitals are essential for delivering a seamless user experience, as they reflect aspects users directly notice. Because the core metrics alone cannot reach 90 points, this weighting should also encourage the bit of extra effort needed to make the experience optimal across all key UX elements.

Final thoughts

We hope to gather feedback from you on our UX scoring methodology, the evaluation process and, hopefully, its successful use. This feedback will help us to continuously refine and improve our approach. Developing a UX score is normally an ongoing process, as user expectations evolve and new technologies emerge. By regularly updating the evaluation criteria and adapting the scoring system accordingly, the score remains relevant for accurately assessing the user experience. Fortunately, Google does the heavy lifting here with (Core) Web Vitals and all that comes with them. We probably only need to tweak the scoring system when those are adjusted.

In an ideal situation, Google PageSpeed Insights should not give a score based solely on synthetic testing but rather take into account real user monitoring (RUM) data. By incorporating RUM data into the scoring mechanism, PageSpeed Insights can provide insights into how a website or application performs in real-world scenarios. This ensures that developers have a clearer understanding of their site's performance for actual users, rather than relying solely on laboratory-based synthetic tests. This holistic approach provides a more comprehensive evaluation that aligns better with real-world usage scenarios.

Should you already want to get a feel for what UX Score your pages are delivering, try https://pagespeed.compare which uses field data (CrUX) to calculate the UX Score discussed above.